One of the main aims I’ve had with all my IoT projects was eventually to integrate them into Azure. One way that I found was via:

Connecting to Azure IoT hub

The limitation there is that it really only gets the telemetry into Azure. From Azure IoT hub you need to send it off to another application to get any real value.

What I wanted to achieve was to send that data into Azure and have it display some sort of result, like a graph, without me having to do too much low-level work.

The solution was to use the Azure IoT Central service. So the project plan was to use what I learned in building an

Adafruit Huzzah Temperature sensor

but instead of simply displaying the results on the serial console, to have the results sent to Azure and displayed in a graph.

The starting point was:

Quickstart: Connect an ESPRESSIF ESP32-Azure IoT Kit to IoT Central

The problem was that the hardware device used in that quickstart now appears to be obsolete:

https://au.mouser.com/ProductDetail/Espressif-Systems/ESP32-Azure-IoT-Kit?qs=PqoDHHvF64%252BuVX1eLQkvaQ%3D%3D

Instead, I decided to use a:

SparkFun Thing Plus – ESP32-S2 WROOM

The hope being that it would be close enough to what the original document wanted.

Also for guidance and source files I used:

Connecting ESPRESSIF ESP32 to Azure IoT Central using the Azure SDK for C Arduino library

You should start here:

Getting started with the ESPRESSIF ESP32 and Azure IoT Central with Azure SDK for C Arduino library

which will take you through setting up a new IoT Central Application, which I won’t repeat here. The result of that will be 3 items that will need to be included in the code for the device:

- ID scope
- Device ID
- Primary key

Next, you’ll need to download all the source files in the repo and include them in a new PlatformIO project. The files are:

- AzureIoT.cpp
- AzureIoT.h
- Azure_IoT_Central_ESP32.ino
- Azure_IoT_PnP_Template.cpp
- Azure_IoT_PnP_Template.h
- iot_configs.h

I renamed Azure_IoT_Central_ESP32.ino to main.cpp in my project.

The next thing you’ll need to do is set your local wifi parameters in the file iot_configs.h. The settings should look like:

// Wifi

#define IOT_CONFIG_WIFI_SSID "<YOUR LOCAL SSID>"

#define IOT_CONFIG_WIFI_PASSWORD "<YOUR WIFI ACCESS POINT PASSWORD>"

Make sure you save any changes to the files you make.

In this same file, also locate and set the Azure IoT Central settings like:

// Azure IoT Central

#define DPS_ID_SCOPE "<ID SCOPE>"

#define IOT_CONFIG_DEVICE_ID "<DEVICE ID>"

// Use device key if not using certificates

#ifndef IOT_CONFIG_USE_X509_CERT

#define IOT_CONFIG_DEVICE_KEY "<PRIMARY KEY>"

#endif // IOT_CONFIG_USE_X509_CERT

which need to include the values obtained when configuring Azure IoT Central earlier.

If you now build your code and upload it to the device you should find that it will connect to your local wifi and start sending information to Azure IoT Central.

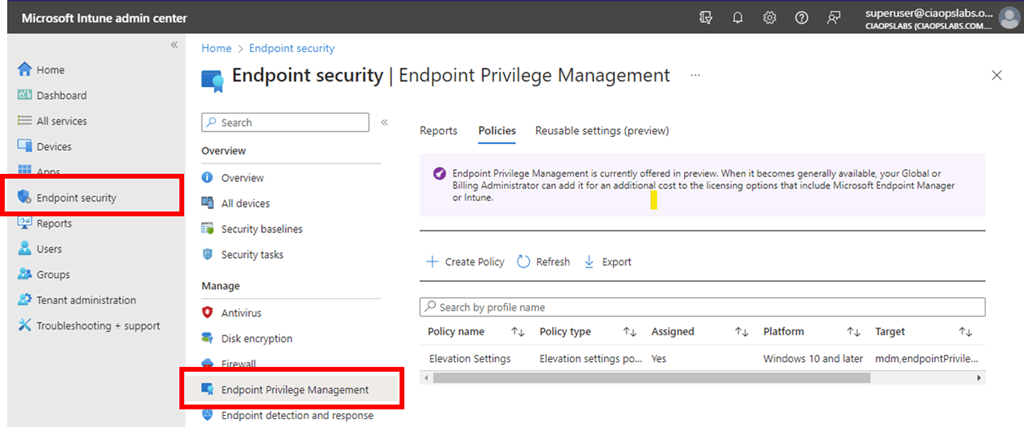

The device configured in Azure IoT Central should report as connected as shown above when you view this in the Azure IoT Central portal at:

https://apps.azureiotcentral.com/

If you then select the Raw Data menu item as shown above, you should see the data from your device being received regularly into Azure.

If you look at the serial monitor connected to the device locally you should see something like the above indicating that data is being sent up to Azure.

This confirms that there is a working connection to the Azure IoT Central portal. The problem is that the data currently being sent is just static dummy data that never changes. What I want to do is send actual data read from a temperature sensor connected to my device. So I need to find the source of that data in the code so I can replace it with the dynamic data from the temperature sensor.

Turns out the source of that dummy data is in the file Azure_IoT_PnP_Template.cpp around line 236:

What I now want to do is replace the static value of 21.0 for temperature and 88.0 for humidity with actual readings from the device.

To achieve that I’ll need the code from the previous project that read the temperature data which is here:

https://github.com/directorcia/Azure/blob/master/Iot/huzzah-tempsens.ino

I’m going to add that to a new file in my project called ciaath.cpp to keep my code separate from the templated Azure stuff. In there I’ll have 2 functions:

float ciaaht_getTemp() which returns temp.temperature

float ciaaht_getHumidity() which returns humidity.relative_humidity

Remember, both temp and humidity are objects and all I want is the actual numeric value in there.

I’ll also create a ciaath.h file that looks like:

#ifndef CIAATH_H

#define CIAATH_H

void ciaaht_init();

float ciaaht_getTemp();

float ciaaht_getHumidity();

#endif

The idea is that this tells other pieces of code about these functions. You’ll also note I have a function ciaaht_init() to initialise the temperature sensor at start up.
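To make the shape of ciaath.cpp a little more concrete, here is a minimal sketch of how the three functions could fit together. It is not the code from the repo: `StubSensor` and `SensorEvent` are hypothetical stand-ins I've made up so the example compiles on a PC without any Arduino library, and the fixed readings are fake. On the real device those would be replaced by the sensor driver's own class and event type.

```cpp
// Sketch of ciaath.cpp. StubSensor and SensorEvent are hypothetical
// stand-ins so this compiles without any Arduino library; on the device
// they would be replaced by the real sensor driver's types.
#include <cstdio>

// Hypothetical event type, shaped like the temp and humidity objects
// described above (each object carries a numeric reading).
struct SensorEvent {
  float temperature = 0.0f;        // degrees Celsius
  float relative_humidity = 0.0f;  // percent
};

// Hypothetical driver: begin() prepares the sensor and getEvent() fills
// both event objects with the latest reading.
class StubSensor {
 public:
  bool begin() {
    ready_ = true;
    return ready_;
  }
  bool getEvent(SensorEvent* humidity, SensorEvent* temp) {
    if (!ready_) return false;
    temp->temperature = 23.5f;            // fixed fake reading
    humidity->relative_humidity = 41.0f;  // fixed fake reading
    return true;
  }

 private:
  bool ready_ = false;
};

static StubSensor sensor;  // one sensor instance, private to this file

// Called once at start up to get the sensor ready.
void ciaaht_init() {
  if (!sensor.begin()) {
    std::printf("Sensor not found\n");
  }
}

// Reads the sensor and hands back only the temperature number.
float ciaaht_getTemp() {
  SensorEvent humidity, temp;
  sensor.getEvent(&humidity, &temp);
  return temp.temperature;
}

// Reads the sensor and hands back only the humidity number.
float ciaaht_getHumidity() {
  SensorEvent humidity, temp;
  sensor.getEvent(&humidity, &temp);
  return humidity.relative_humidity;
}
```

Keeping the sensor object `static` inside this one file means the Azure template code never touches the sensor directly. It only sees the three functions declared in ciaath.h.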

Back in the Azure_IoT_PnP_Template.cpp file I need to include the line:

#include <ciaath.h>

to tell it about the functions in my ciaath.cpp file. I can now also change the lines that report the temperature and humidity from their original static values to the values read from the temperature sensor connected to my device:

static float simulated_get_temperature() { return ciaaht_getTemp(); }

static float simulated_get_humidity() { return ciaaht_getHumidity(); }

which basically get the data from my device which will then be sent to Azure.
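To picture how those two one-line functions feed into what gets sent, here is a small illustrative sketch. It is not the template's code: `build_payload()` is a hypothetical helper, the JSON field names are assumptions, and the two `ciaaht_` functions are replaced by fixed stand-ins so it runs on a PC. The real template builds its message through the Azure SDK rather than with `snprintf`.

```cpp
#include <cstdio>
#include <string>

// Stand-ins for the sensor functions; fixed values keep the sketch runnable.
float ciaaht_getTemp() { return 21.0f; }
float ciaaht_getHumidity() { return 88.0f; }

// The same one-line wrappers as above: the template calls these, and they
// simply pass the call through to the sensor functions.
static float simulated_get_temperature() { return ciaaht_getTemp(); }
static float simulated_get_humidity() { return ciaaht_getHumidity(); }

// Hypothetical helper (not from the template): formats one telemetry
// message from whatever the wrappers return at that moment.
std::string build_payload() {
  char buffer[64];
  std::snprintf(buffer, sizeof(buffer),
                "{\"temperature\":%.2f,\"humidity\":%.2f}",
                simulated_get_temperature(), simulated_get_humidity());
  return std::string(buffer);
}
```

The point is that nothing else in the template has to change: every time a message is built, the wrappers are called again, so each message carries a fresh reading.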

Back in main.cpp I need to add:

#include "ciaath.h"

to tell it about my custom functions. I also have to add around line 359:

ciaaht_init();

to initialise the temperature sensor on my device at startup.

Once this all compiles and uploads to the device, I can check the Azure IoT Central portal again and see, in the Overview menu item, that my temperature and humidity are no longer constant.

If I heat up the temperature sensor connected to my device I see:

and if I leave it to return to normal I see:

I’ve put all the code up at:

https://github.com/directorcia/Azure/tree/master/Iot/ESP32-S2/IoT-Central

so you can have a look and use it if you need to.

I did need to get some help along the way, especially with the code: working out where the values uploaded to Azure initially came from, and how to structure the .h files to make things cleaner. I’m no coder, but hopefully my explanation here helps other non-coders. Let me know if I haven’t got it right, as I really want to better understand all this.

I’m now super happy I have this working and I’m confident that I can use this as a base to start creating more powerful projects connected to Azure!